The track involves a qualification phase in which teams perform the handover configurations of the CORSMAL Benchmark protocol remotely in their own laboratory (see also the open access publication). Results will be submitted to the track organisers, and teams will be ranked on a leaderboard on this page according to the performance scores of the benchmarking protocol.

Below we also provide details, guidelines, instructions, and documentation for preparing a solution, including reference software, publications, and data.

The CORSMAL Containers Manipulation dataset can be used to design perception-based solutions for the approaching phase and prior to the handover of the object to a robot.

The following teams have qualified for the on-site competition in Vienna in June 2026 based on their commitment, performance in the qualification phase, robot availability and requests, space available in Vienna, and other factors. The list is in alphabetic order.

- 3D Vision & Robotics Lab (UR5 request)

- AIRLab (UR5 request)

- Broken Arm (own robot)

- IITGN Robotics Lab (Franka request)

- LARICS Grippers (own robot)

- Robot Booster (own robot)

- Shakey's Legacy (Franka request, or own robot)

- SIRSIIT (Franka request)

- Youth2Real (own robot)

Teams are ranked by the benchmark score.

The offline score s9 (human intention/hand pose prediction) has not been considered for the ranking as it is not a requirement for the qualification phase, but it is still computed and reported for reference and future comparisons. The maximum benchmark score is 88.89. The maximum score for the robotic subsystem is 66.67.

We provide the scores obtained by the CORSMAL baseline (see the article in IEEE Robotics and Automation Letters, 2020) for reference only, as the computation of several scores has been revised and updated for the qualification.

Benchmark scores

Results are also grouped by vision subsystem, robotic subsystem, and completion of the overall task.

Notes on results and scoring:

- SIRSIIT and AIRLab (returning teams from previous years) submitted results only for the task scores (s10, s11, s12, s13).

- Robot Booster uses prior information of the content density to compute the mass of the containers and content.

- 3D Vision & Robotics Lab uses a rule-based approach for vision-based mass estimation based on prior knowledge of the physical properties of the cups (empty-container weight and container height). We provide a second entry that does not account for the mass estimation.

- IITGN Robotics Lab completed only 216 configurations: the target delivery location was set for each configuration, but the score is computed with respect to a fixed one due to missing reporting. Timings were not correctly reported.

- All teams except SIRSIIT use alternative cups, and their scores are computed relative to the measurements of the cups they used.

- Broken Arm, LARICS Gripsters, Robot Booster, Shakey's Legacy, 3D Vision & Robotics Lab, IITGN Robotics Lab, and Youth2Real provided results for end-effector reachability (score s8).

- s2: score for vision-based estimation of the container width at the bottom (mm)

- s3: score for vision-based estimation of the container height (mm)

- s4: score for vision-based estimation of the container mass (container + filling)

- s5: score for vision-based estimation of the container fullness (%)

- s6: score for robot-based estimation of the container mass (container + filling)

- s7: offline score for robot-based estimation of the human intention or human hand pose trajectory (not computed)

- s8: offline score for robot-based estimation of end-effector reachability

- s9: score for delivery location accuracy

- s10: score for final mass estimation (container + filling) to assess spilling

- s11: score for the human manoeuvring time

- s12: score for the handover time

- s13: score for the robot manoeuvring time

- C2: Cup 2

- C3: Cup 3

- C4: Cup 4

- G1: Grasp 1

- G2: Grasp 2

- G3: Grasp 3

- HO-L: handover left location

- HO-C: handover centre location

- HO-R: handover right location

The benchmark protocol includes 4 cups with different dimensions and materials. If teams do not have access to the exact same cups (e.g. due to geographical location or broken/outdated purchase links), they can use alternative cups with similar physical properties (e.g. weights, dimensions, materials).

Teams must provide the details of the alternative cups following the template provided below (filled with the benchmark cups information as an example), and submit them to the track organisers for approval before performing the handover configurations. Submitted alternative cups will be checked to assess whether their physical properties are similar to those of the benchmark cups within tolerable ranges.

Images of the cups must also be provided.

Checklist

- Alternative cups details using the JSON template

- Images of the alternative cups (top, side, bottom, empty, filled) next to a coin and at a fixed distance from the camera
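The similarity check on alternative cups can be pictured as a per-property relative comparison against a benchmark cup. The sketch below is only illustrative: the field names (`mass_g`, `height_mm`, `width_top_mm`, `width_bottom_mm`), the example values, and the 15% tolerance are assumptions made for this example, not the organisers' JSON template or acceptance ranges.

```python
import json

# Hypothetical field names; the official JSON template may use different keys.
PROPERTIES = ("mass_g", "height_mm", "width_top_mm", "width_bottom_mm")


def relative_differences(reference: dict, alternative: dict) -> dict:
    """Return |alternative - reference| / reference for each property."""
    return {
        key: abs(alternative[key] - reference[key]) / reference[key]
        for key in PROPERTIES
    }


def is_similar(reference: dict, alternative: dict, tolerance: float = 0.15) -> bool:
    """True if every property is within the (assumed) relative tolerance."""
    diffs = relative_differences(reference, alternative)
    return all(diff <= tolerance for diff in diffs.values())


# Made-up values for illustration only (not the benchmark cup measurements).
reference_cup = json.loads(
    '{"mass_g": 10.0, "height_mm": 100.0,'
    ' "width_top_mm": 80.0, "width_bottom_mm": 50.0}'
)
alternative_cup = {
    "mass_g": 11.0,
    "height_mm": 95.0,
    "width_top_mm": 84.0,
    "width_bottom_mm": 52.0,
}

print(relative_differences(reference_cup, alternative_cup))
print(is_similar(reference_cup, alternative_cup))
```

Running such a check locally before emailing the cup details can help spot a property that is clearly out of range, but the organisers' approval remains the deciding step.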

Teams will submit their results by email to the track organisers using the information below. The package of files and links must be submitted by April 16. Qualification will be based on this submission. Teams will be able to update their submission after the deadline, either to further prepare for the competition in Vienna if qualified, or to update their results on the leaderboard so that their performance is shared with the community and available for future comparisons in publications.

Checklist

- Metadata JSON form filled with all required information (team information, assumptions, ethics compliance, optional demographics, video links, robot reachability, target delivery location)

- Submission CSV form filled with all required information (measurements for each configuration)

- CSV file for offline score s8, named s8_submission_TEAM_NAME.csv and containing all 18 pose records (3 reps x 6 poses), positions in millimeters, and motion times in milliseconds

- Anonymised video links accessible to the organisers (no identifying people present; one or more videos can be shared grouping configurations)

- Optional logs (e.g. joint-state, intermediate measurements) and calibration files (highly recommended to save and store them for reproducibility, especially for every change occurring across configurations)
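Before sending the package, the submission CSV form can be checked locally against the formatting constraints listed in the notes below (comma separators, unchanged headers, no empty cells or 'NA'). The following is a minimal sketch of such a check; the header names in `EXPECTED_HEADERS` are placeholders invented for this example and must be replaced with the headers of the official form.

```python
import csv
import io

# Placeholder headers: replace with those of the official submission form.
EXPECTED_HEADERS = ["config_id", "mass_before_g", "mass_after_g"]
MISSING_MARKERS = {"", "NA"}


def validate_submission(text: str, expected_headers: list) -> list:
    """Return a list of human-readable problems found in the CSV text."""
    rows = list(csv.reader(io.StringIO(text), delimiter=","))
    if not rows:
        return ["file is empty"]
    problems = []
    header, records = rows[0], rows[1:]
    # Renamed, reordered, additional, or wrongly separated headers all fail.
    if header != expected_headers:
        problems.append(f"unexpected headers: {header}")
    for line_no, row in enumerate(records, start=2):
        if len(row) != len(expected_headers):
            problems.append(
                f"line {line_no}: expected {len(expected_headers)} values, "
                f"found {len(row)}"
            )
        if any(value.strip() in MISSING_MARKERS for value in row):
            problems.append(f"line {line_no}: missing value")
    return problems


good = "config_id,mass_before_g,mass_after_g\n1,134.0,133.5\n"
bad = "config_id,mass_before_g,mass_after_g\n1,,NA\n"
print(validate_submission(good, EXPECTED_HEADERS))
print(validate_submission(bad, EXPECTED_HEADERS))
```

A file saved with semicolon separators would be reported through both the header check and the value-count check, since each line is then read as a single cell.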

Notes:

- The offline score s7 will not be computed (no need to submit anything).

- The targeted delivery location (same for all configurations) must be reported in the metadata JSON form.

- The submission CSV form must use comma separators, must not contain additional columns or renamed headers, and must not contain any missing values (e.g. empty cells or 'NA'). Please follow the metadocumentation for the values to be reported.

- Handover configurations can be executed across multiple days. We expect that the configurations executed by a given volunteer are performed in the same session (same date and time slot). Detailed logs indicating the date and time of each recorded configuration can be provided but are optional.

- Repeating a configuration if the handover fails is allowed. Please report failed and repeated cases in a separate file and show also the recording.

- For the videos, providing a caption of the executed configuration and timing would be useful. Please include some short documentation for the video structure and continuity in case of multiple files.

- Check the Ethics policy, Safe recording practices, Demographics, and Data retention policy before performing the configurations with participants, and for the preparation of the videos and metadata information to be reported.

- Detailed information about the offline score s8 is available here.

- The protocol for s8 would require the gripper orientation not to be fixed, but to be adjusted smoothly during the arm motion so that it points towards the central pose (0, r/2, 0) at each final pose. Teams will not be penalised if the end-effector orientation is ignored in the reporting. We will use these measurements to ensure that the score can be fully computed in the future and to observe differences in the score.
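For teams that do adjust the gripper orientation, the direction towards the central pose (0, r/2, 0) can be computed from each final end-effector position. The sketch below is an illustration under assumptions: the function name is hypothetical, positions and `r` are taken to be in millimetres in the same reference frame as the s8 protocol, and "pointing" is interpreted as aligning the gripper approach axis with the returned unit vector. The detailed s8 documentation takes precedence over this reading.

```python
import math


def pointing_direction(position, r):
    """Unit vector from an end-effector position towards (0, r/2, 0)."""
    target = (0.0, r / 2.0, 0.0)
    delta = [t - p for t, p in zip(target, position)]
    norm = math.sqrt(sum(d * d for d in delta))
    if norm == 0.0:
        # The direction is undefined when the position is the central pose.
        raise ValueError("position coincides with the central pose")
    return tuple(d / norm for d in delta)


# Made-up final position and radius, for illustration only.
print(pointing_direction((300.0, 400.0, 0.0), r=800.0))
```

Converting this vector into a full end-effector orientation additionally requires choosing the rotation about the approach axis, which this sketch leaves open.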

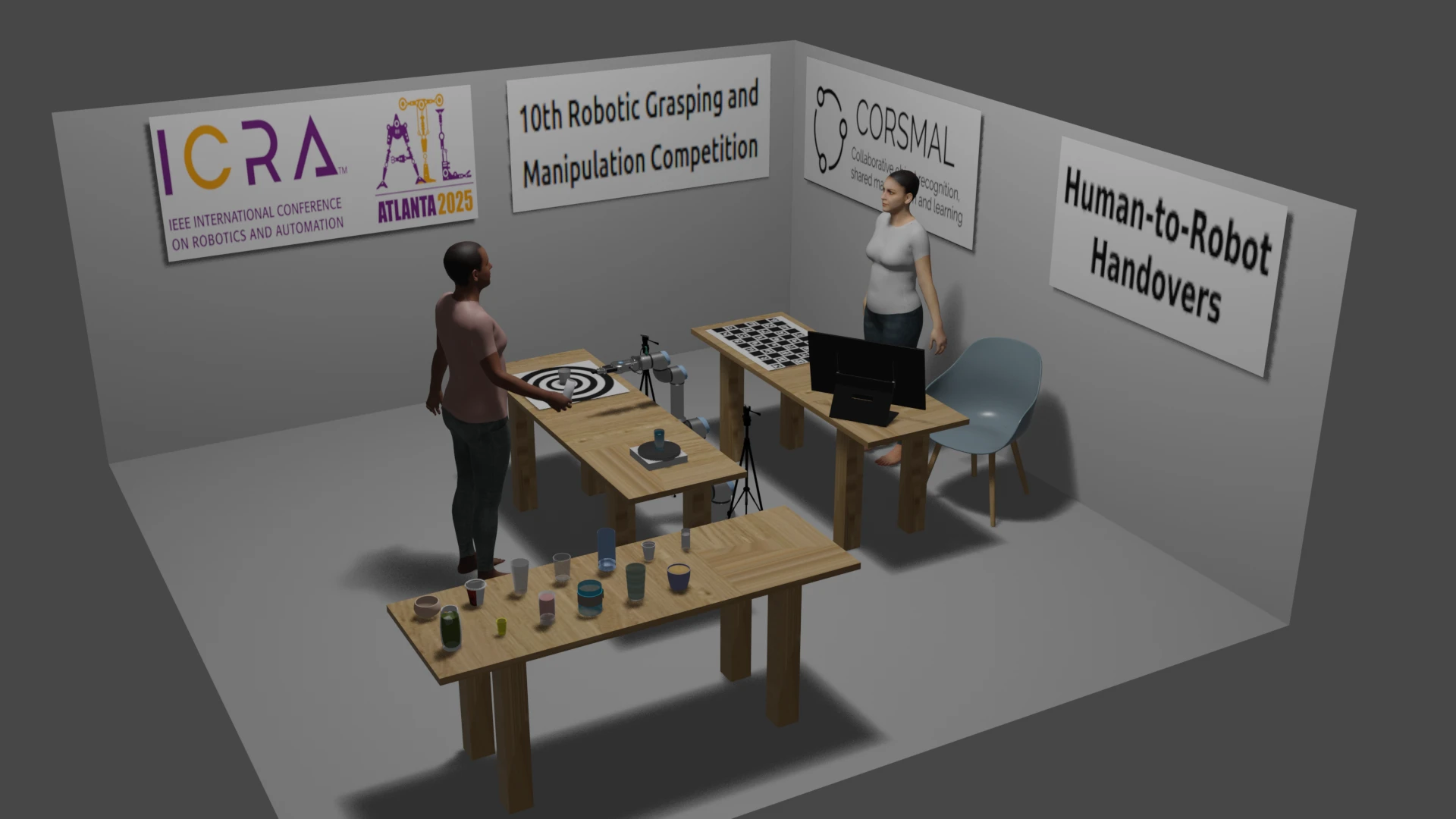

The setup includes a robotic arm with at least 6 degrees of freedom (e.g., UR5, KUKA) equipped with a 2-finger parallel gripper (e.g., Robotiq 2F-85); a table on which the robot is placed and over which the handover takes place; selected containers and contents; up to two cameras (e.g., Intel RealSense D435i); and a digital scale to weigh the container. The table is covered by a white tablecloth. The two cameras should be placed at 40 cm from the robotic arm, e.g. using tripods, and oriented so that they both view the centre of the table. The illustration represents the 3D layout of the setup within a space of 4.5 x 4.5 meters.

- Teams must prepare the sensing setup such that the cameras are synchronised, calibrated and localised with respect to a calibration board. We recommend that the cameras record RGB sequences at 30 Hz with a resolution of 1280 × 720 pixels (based on the setup used in the CORSMAL Benchmark).

- Teams should verify the behaviour of the robotic arm prior to the execution of the task (e.g., end-effector, speed, kinematics, etc.)

- Teams will prepare all configurations with their corresponding container and filling before starting the task.

- Teams must weigh the container and its content, if any, for each configuration before and after executing the handover to the robot, using a digital scale.

- A volunteer from the team will be the person who will hand the container over to the robot using a random/natural grasp for each configuration.

- Any initial robot pose can be chosen with respect to the environment setup; however, the subject is expected to stand on the opposite side of the table with respect to the robot.

These instructions have been revised from the CORSMAL Human-to-Robot Handover Benchmark document.

- Position cameras so that only the hands, arms, and the objects involved in the handover are visible.

- Avoid capturing faces, torsos, or identifiable body features.

- Use a fixed camera angle whenever possible.

- Use a neutral, non-identifying background (e.g., plain wall, table surface).

- Remove or cover:

  - whiteboards

  - posters

  - screens

  - personal items

  - documents

  - lab identifiers

- Disable audio recording whenever possible.

- If audio is unavoidable, ensure no names, conversations, or identifying information are captured.

- Ensure adequate lighting to clearly see the handover, but avoid shadows that reveal facial features or silhouettes.

- Volunteers should avoid:

  - distinctive clothing

  - jewellery

  - watches

  - tattoos

  - badges

  - lab IDs

- If unavoidable, blur these elements before submission.

- blur any accidental identifying features

- crop frames to exclude faces

- remove audio if it contains speech

- verify the final video before submission

- Videos must be uploaded via a private link (e.g., unlisted YouTube, private cloud link).

- Public links are not allowed unless explicit consent is obtained.

Teams may provide the following for each volunteer:

- age_group: "18-25", "26-35", "36-45", "46+"

- gender: "male", "female", "non-binary", "prefer_not_to_say"

- dominant_hand: "left", "right", "ambidextrous"

- experience_level (with robot systems): "novice", "intermediate", "expert"

Teams must not provide:

- exact age

- ethnicity

- height or weight

- health or disability information

- biometric traits

- occupation or job title

- years of experience

- any free-text that could identify a person

- All demographic fields are optional.

- Volunteers may choose "prefer_not_to_say" for any field.

- Demographic data is stored only in the submission metadata.

- It is not linked to identifiable video content.
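The demographic rules above lend themselves to a simple check before the metadata is submitted. The sketch below assumes that each volunteer's demographics are stored as a flat dictionary keyed by the field names listed above; that structure and the function name are assumptions for illustration, and the official metadata JSON form may organise the data differently.

```python
# Allowed fields and values, copied from the lists above.
ALLOWED = {
    "age_group": {"18-25", "26-35", "36-45", "46+"},
    "gender": {"male", "female", "non-binary", "prefer_not_to_say"},
    "dominant_hand": {"left", "right", "ambidextrous"},
    "experience_level": {"novice", "intermediate", "expert"},
}


def check_demographics(entry: dict) -> list:
    """Return a list of problems; an empty list means the entry is acceptable."""
    problems = []
    for field, value in entry.items():
        if field not in ALLOWED:
            # Anything outside the four optional fields must not be provided.
            problems.append(f"field not allowed: {field}")
        elif value != "prefer_not_to_say" and value not in ALLOWED[field]:
            problems.append(f"invalid value for {field}: {value}")
    return problems


# All fields are optional, so a partial entry is acceptable.
print(check_demographics({"age_group": "26-35", "dominant_hand": "prefer_not_to_say"}))
print(check_demographics({"exact_age": 31, "gender": "unknown"}))
```

Rejecting every field outside the allowed set, rather than listing the forbidden ones, also catches free-text fields that could identify a person.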

Benchmark for human-to-robot handovers of unseen containers with unknown filling

R. Sanchez-Matilla, K. Chatzilygeroudis, A. Modas, N. F. Duarte, A. Xompero, P. Frossard, A. Billard, A. Cavallaro

IEEE Robotics and Automation Letters, 5(2), pp.1642-1649, 2020

[Open Access]

The CORSMAL benchmark for the prediction of the properties of containers

A. Xompero, S. Donaher, V. Iashin, F. Palermo, G. Solak, C. Coppola, R. Ishikawa, Y. Nagao, R. Hachiuma, Q. Liu, F. Feng, C. Lan, R. H. M. Chan, G. Christmann, J. Song, G. Neeharika, C. K. T. Reddy, D. Jain, B. U. Rehman, A. Cavallaro

IEEE Access, vol. 10, 2022.

[Open Access]

Towards safe human-to-robot handovers of unknown containers

Y. L. Pang, A. Xompero, C. Oh, A. Cavallaro

IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), Virtual, 8-12 Aug 2021

[Open Access]

[code]

[webpage]

Stereo Hand-Object Reconstruction for Human-to-Robot Handover

Y. L. Pang, A. Xompero, C. Oh, A. Cavallaro

IEEE Robotics and Automation Letters, 2025

[arxiv]

[code]

[webpage]

The CORSMAL Challenge contains perception solutions for the estimation of the physical properties of manipulated objects prior to a handover to a robot arm.

[challenge]

[paper 1]

[paper 2]

Additional references

[document]

Vision baseline

A vision-based algorithm, part of a larger system, proposed for localising, tracking and estimating the dimensions of a container with a stereo camera.

[paper]

[code]

[webpage]

LoDE

A method that jointly localises container-like objects and estimates their dimensions with a generative 3D sampling model and a multi-view 3D-2D iterative shape fitting, using two wide-baseline, calibrated RGB cameras.

[paper]

[arxiv]

[code]

[webpage]

CORSMAL Containers Manipulation (1.0) [Data set]

A. Xompero, R. Sanchez-Matilla, R. Mazzon, and A. Cavallaro

Queen Mary University of London. https://doi.org/10.17636/101CORSMAL1

[data]

[webpage]